Prototyping AR on the Web

In the spring of 2017, Google's Daydream WebXR team wanted to explore building AR experiences on the web. We had a handful of technical challenges to solve, like integrating an AR platform with a web browser and bikeshedding an API that could leverage these AR features while remaining "webby".

We also wanted to discover what new experiences could be built if web developers had access to AR, gather feedback, and get web devs excited about spatial computing. At the time, AR on the web was limited to marker-based localization (AR.js), and bringing 6DOF tracking and scene understanding to web content would unlock many new possibilities.

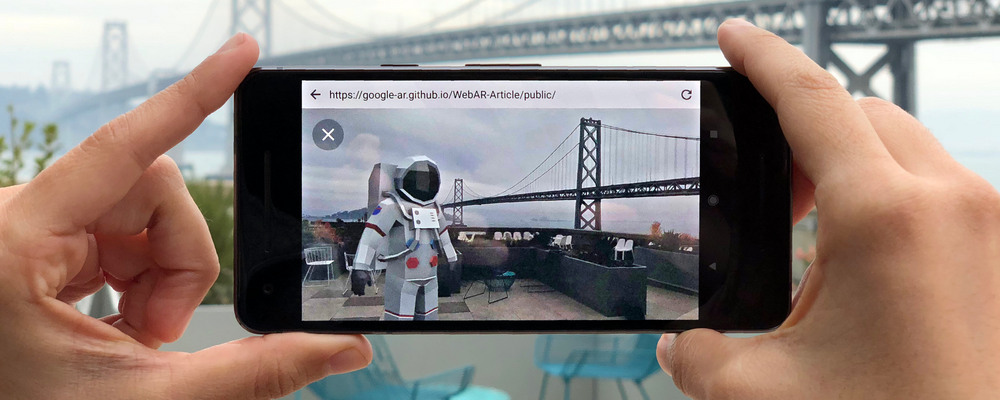

View of an augmented reality model from ARticle, powered by Daydream WebXR's AR browsers.

We began building off of Iker Jamardo's earlier work on exposing AR features in Chromium on top of the Tango AR platform, leveraging infrared cameras on mobile devices. The result was a special build of Chromium that contained additional APIs exposed to web content.

AR Platform

The baseline for any AR experience on a mobile device requires a few capabilities:

- Tracking the device's position and orientation in real-world space (6DOF).

- Rendering the camera to overlay 3D content. While this was already possible via getUserMedia, there were several additional complexities, like mobile devices disallowing multiple apps (e.g. a browser app and an AR platform app) using the camera concurrently, as well as exposing device-specific camera intrinsics in order to produce an accurate projection matrix.

- Understanding the real world. In order to enable interaction with the world, there must exist a way to identify objects in the camera feed. AR platforms are constantly improving in this area with APIs for plane detection, mesh reconstruction, light estimation, and point clouds.

- The ability to "anchor" locations in 3D. Anchors are necessary to counteract drift, and are refined over the course of an AR session as scene understanding improves (ARKit ARAnchors, ARCore Anchors).
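To make the camera intrinsics point concrete, here is a minimal sketch of turning pinhole intrinsics into an OpenGL-style projection matrix. It is not taken from our browsers: the `CameraIntrinsics` shape, the function name, and the sample values are all hypothetical, and it assumes pixel coordinates with the origin at the top-left of the image, a camera looking down -Z, and a column-major matrix as WebGL expects.

```typescript
// Hypothetical shape for pinhole camera intrinsics, all values in pixels.
// These names are illustrative and not part of any AR platform API.
interface CameraIntrinsics {
  fx: number; // focal length along x
  fy: number; // focal length along y
  cx: number; // principal point x, measured from the left edge
  cy: number; // principal point y, measured from the top edge
  width: number; // image width
  height: number; // image height
}

// Builds a column-major 4x4 projection matrix (OpenGL clip-space conventions)
// so that rendered 3D content lines up with the camera image.
function projectionFromIntrinsics(
  k: CameraIntrinsics,
  near: number,
  far: number
): number[] {
  const m: number[] = new Array<number>(16).fill(0);
  m[0] = (2 * k.fx) / k.width; // x scale
  m[5] = (2 * k.fy) / k.height; // y scale
  m[8] = 1 - (2 * k.cx) / k.width; // x offset for an off-center principal point
  m[9] = (2 * k.cy) / k.height - 1; // y offset (image y points down, GL y points up)
  m[10] = -(far + near) / (far - near); // depth remap to [-1, 1]
  m[11] = -1; // perspective divide by -z
  m[14] = (-2 * far * near) / (far - near);
  return m;
}

// Example with made-up intrinsics for a 1920x1080 camera image.
const projection = projectionFromIntrinsics(
  { fx: 1500, fy: 1500, cx: 960, cy: 540, width: 1920, height: 1080 },
  0.01,
  100
);
console.log(projection);
```

In three.js, an array like this could be copied into a camera with `camera.projectionMatrix.fromArray(projection)`. A real integration would also need to account for device orientation and any cropping between the camera image and the viewport, which this sketch ignores.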

Early Prototypes

As we built out the platform, the earlier prototypes focused on implementing and testing new features, and iterating on our own abstractions and helper libraries to make it as simple as possible to build anything with AR. These were very much early tech demos.

The capabilities of the Tango platform and its infrared camera felt powerful and fun, allowing us to have rather accurate hit testing, as well as explore realtime point cloud data, used for collision and rough occlusion.
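As a rough illustration of hit testing against point cloud data, the sketch below casts a ray and returns the closest cloud point that lies near it. This is an assumed, simplified approach for explanation only, not how Tango or our browsers implemented hit testing; the type names, function name, and the 5 cm tolerance are hypothetical.

```typescript
type Point3 = [number, number, number];

// A ray in world space, e.g. from the camera through a tapped screen position.
interface HitRay {
  origin: Point3;
  direction: Point3; // does not need to be normalized
}

// Returns the point nearest to the ray origin that lies within
// `tolerance` meters of the ray, or null if no point qualifies.
function hitTestPointCloud(
  ray: HitRay,
  points: Point3[],
  tolerance = 0.05
): Point3 | null {
  const [dx, dy, dz] = ray.direction;
  const length = Math.hypot(dx, dy, dz);
  if (length === 0) return null;
  const dir: Point3 = [dx / length, dy / length, dz / length];

  let best: Point3 | null = null;
  let bestT = Infinity;
  for (const p of points) {
    // Vector from the ray origin to the point.
    const vx = p[0] - ray.origin[0];
    const vy = p[1] - ray.origin[1];
    const vz = p[2] - ray.origin[2];
    // Distance along the ray to the point's projection onto it.
    const t = vx * dir[0] + vy * dir[1] + vz * dir[2];
    if (t <= 0) continue; // point is behind the ray origin
    // Perpendicular distance from the point to the ray.
    const perp = Math.hypot(vx - t * dir[0], vy - t * dir[1], vz - t * dir[2]);
    if (perp <= tolerance && t < bestT) {
      bestT = t;
      best = p;
    }
  }
  return best;
}
```

A linear scan like this is fine for a sketch, but a dense, realtime point cloud would likely call for a spatial index or a plane fit around the hit point to get a stable surface normal.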

Our browser and examples were open sourced, and we had interest from developers, but unfortunately there were very few who had access to Tango devices. Luckily, the next iteration was more accessible.

WebARonARCore and WebARonARKit

The release of Apple's ARKit and Google's ARCore in the summer of 2017 brought augmented reality SDKs to mobile developers, enabling the creation of AR experiences for millions of Android and iOS devices.

We wanted to bring the same capabilities to web developers, and coinciding with ARCore's launch, we released new experimental browsers that implemented augmented reality APIs on the web.

Taking our lessons learned from prototyping on our Tango-powered build of Chromium, we refined our API, an extension of the WebVR 1.1 API with augmented reality features (IDL). We built two new browsers, WebARonARKit for iOS and WebARonARCore for Android, and updated our Tango browser as WebARonTango. With names that only a lawyer could love, all 3 browsers implemented an interoperable API, enabling cross-platform experiences.

We also created three.ar.js, a JavaScript library that extends the popular 3D framework three.js, to make it easier to develop AR content using the tools that 3D web developers are already familiar with.

Shortly after the release of WebARonARCore and WebARonARKit, I shared some of our prototypes and ideas in "The future of virtual and augmented reality for the web" at Coldfront 2017.

WebARonTango

The ARCore and ARKit platforms use the standard, ubiquitous RGB camera on mobile devices, while Tango devices used both RGB and IR cameras, allowing WebARonTango to implement features that required infrared depth sensing, like point cloud data or ADFs. Shortly after the ARCore launch, Tango was discontinued in favor of ARCore, and as of writing, both ARKit and ARCore implement similar functionality via feature points and point clouds.

As powerful as Tango's infrared camera was, only a few devices supported it. When these browsers launched, 500 million devices supported ARCore and ARKit, letting us reach many more developers, who could build AR web content with a device they might already own.

Response

We had a lot of positive responses from web developers and the Immersive Web community, as we continued building the prototypes in the open. Mozilla experimented with their own AR platform that supported their own iOS WebXR Viewer, as well as WebARonARCore and WebARonARKit. An A-Frame extension was created for developing AR experiences on our AR stack using the popular WebVR framework A-Frame. As the underlying AR platform evolved, so did our browsers and tools, like adding surface detection.

Over the next few months, we kept iterating on our public experiments and internal prototypes. We leaned more into what the web can uniquely do in this space, and continually refined our own dogfooding tools. Reza Ali and Josh Carpenter summarized developing on our stack, sharing an AR experience embedded in web content in "Augmented reality on the web, for everyone", garnering excitement from the press.

At the time, proprietary JavaScript SLAM-based solutions (e.g. 8th Wall) were still a few years away. As of writing, the standardization of the WebXR Device API for AR is nearing an implemented and stable state for early 2020. As one of the earliest platforms for web augmented reality, our stack of AR browsers and tools was the foundation of Daydream WebXR's prototypes and later projects.

Press

- Google Blog: Augmented reality on the web, for everyone

- The Verge: Google is working on bringing AR to Chrome with downloadable 3D objects

- VentureBeat: Google's web AR announcement is a boon for advertisers

- VRScout: Google's Experiment With Web-Based Augmented Reality

- Next Reality: Google AR Prototype Enables 3D Model Viewing Through Web Browsers